UX Case Study: Modular Content System

Context:

Thoropass's compliance management platform guided clients through the implementation of security frameworks like SOC 2, ISO 27001, and HIPAA. Small clients might need only one of these frameworks, but as Thoropass moved upmarket, larger clients often wanted to implement and manage several at once.

Unfortunately, the expert content in Thoropass’s platform was written and released for each framework individually, sometimes months or even years apart. If a client purchased more than one framework, they would be forced to see multiple versions of their content, including instructions that often conflicted with each other.

Depending on their combination of frameworks, clients could see up to 80% redundant content, leading to duplicated work and serious confusion.

Understanding the problem:

We conducted user interviews with key customers and our own customer service managers to determine how deep the problem was. Some of our key insights:

The existing experience was so confusing that many users didn't even realize it was happening to them. They just thought the platform was bugged and would mark "duplicate" instructions as done without looking deeper.

Thoropass touted itself as a smart platform, and users expected to see one set of instructions per topic, covering all their frameworks.

Our customer service managers would, when feasible, go into the customer's environment themselves and mark off as many tasks as they could to ease the burden on users.

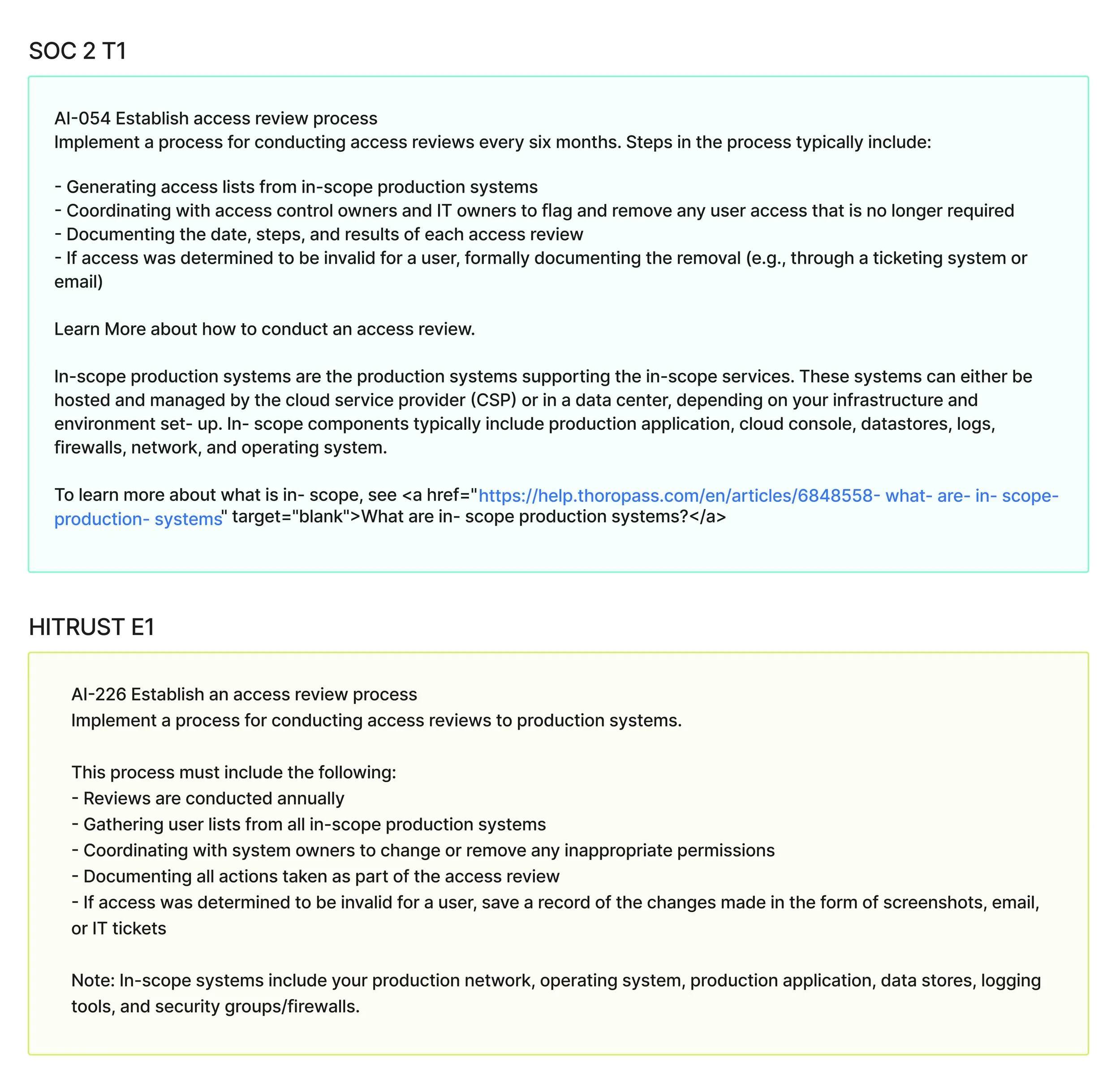

The issue of duplicate content was everywhere in the app. As just one example, consider access reviews, the process of validating that users have the correct access to resources in your company. When clients with both SOC 2 and HITRUST content needed to conduct access reviews, they would see these two pieces of instruction:

Expert content for SOC 2 and HITRUST for the same task.

Confused? You’re not the only one.

Where does the user start? What’s the difference between these pieces of content? It was unacceptable to force the user to compare and contrast multiple sets of instructions before they could start their most essential tasks.

Now imagine this issue repeated across a dozen frameworks and hundreds of topics. It was, for some clients, a very understandable dealbreaker.

Choosing our requirements:

We needed to design a unified system that displayed accurate content no matter what products the client purchased. My product manager tasked me with designing a content system that would meet the following requirements:

Programmatically arrange content for the user depending on their frameworks

Understand where multiple frameworks conflicted and know how to reconcile those conflicts

Be extensible, something that could be built upon when implementing future frameworks

Use our existing content as much as possible

Analyzing our existing content:

One of my first tasks was to analyze our existing content to inform our plan, and the results were promising. Due to content guidelines that I had implemented the previous year, much of the content was already following common-sense patterns.

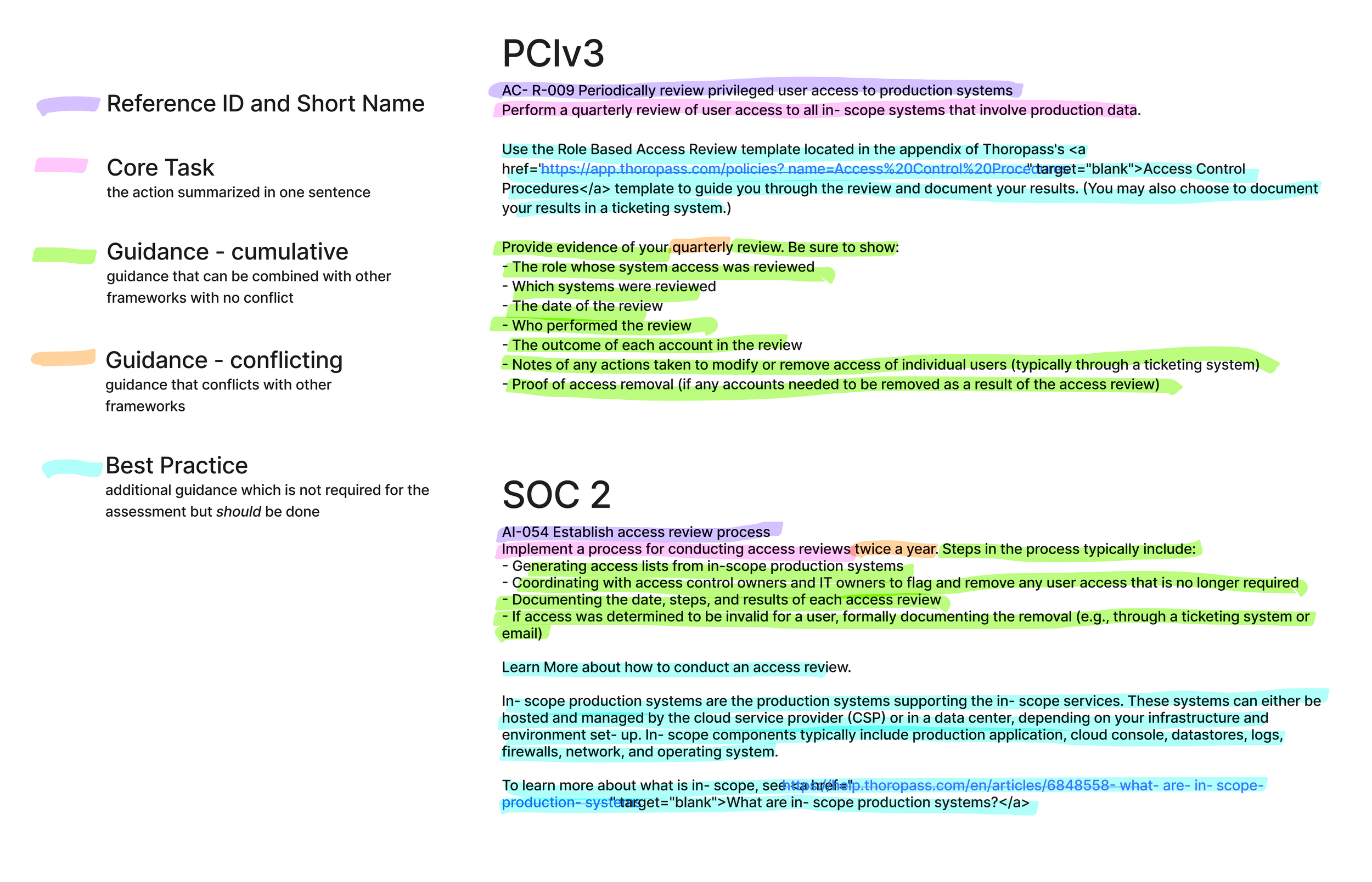

Element breakdown of pre-project content.

If you look closely at our examples above, you might notice that although the instructions look very different from each other, they mostly agree on what goals to accomplish. In the case of access reviews, for example, all frameworks required that you:

Periodically perform reviews

Review every system being audited

Document the process

Instructions may disagree on the specifics of these goals (Are emails acceptable as evidence of the change, or is an IT ticket required? Does the review need to occur quarterly or biannually?), but these differences can be smoothed over with the right use of language.

When frameworks are more stringent or conflicting:

When the frameworks agreed on goal and execution, the work consisted of standardizing and applying the language. Time-consuming but straightforward.

But what about when the frameworks didn’t agree? How do we represent that information?

In some cases, a more rigorous framework may have additional requirements on top of the baseline. For example, it might require you to generate access lists for physical security badges in addition to digital systems. I began to refer to these as cumulative requirements: items that could simply be added on top of each other without issue.

In other cases, two frameworks may have mutually exclusive requirements. Common examples would be frequency, such as requiring that a certain activity occur annually, biannually, or quarterly, or the strictness of a requirement, such as one framework requiring passwords to be a minimum of 10 characters in length and another requiring a minimum of 14.

I referred to these as conflicting requirements. These always existed on a spectrum from least strict (e.g., annual testing) to most strict (e.g., monthly testing). When these occur, we need to place them on the spectrum to determine when they should be displayed.
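As a rough illustration of the two categories (not our production code), the sketch below merges the rules of several frameworks. Cumulative requirements are simply combined, while conflicting requirements are placed on a strictness spectrum. The names `FrameworkRules`, `merge_rules`, and `FREQUENCY_SPECTRUM` are hypothetical, the framework values are made up, and the sketch assumes the strictest applicable value is the one shown to the user.

```python
from dataclasses import dataclass, field

# Hypothetical strictness spectrum for frequency, ordered least to most strict.
FREQUENCY_SPECTRUM = ["annually", "biannually", "quarterly", "monthly"]


@dataclass
class FrameworkRules:
    name: str
    # Cumulative requirements: extra steps that stack without conflict.
    cumulative: set[str] = field(default_factory=set)
    # Conflicting requirement: one position on the frequency spectrum.
    frequency: str = "annually"
    # Conflicting requirement: minimum password length.
    min_password_length: int = 0


def merge_rules(frameworks: list[FrameworkRules]) -> dict:
    """Combine rules for every framework a client has purchased."""
    if not frameworks:
        raise ValueError("at least one framework is required")

    # Cumulative requirements are unioned: each one is shown exactly once.
    cumulative: set[str] = set()
    for fw in frameworks:
        cumulative |= fw.cumulative

    # Conflicting requirements: assume the strictest value on the spectrum wins.
    frequency = max(
        (fw.frequency for fw in frameworks), key=FREQUENCY_SPECTRUM.index
    )
    min_password_length = max(fw.min_password_length for fw in frameworks)

    return {
        "cumulative": sorted(cumulative),
        "frequency": frequency,
        "min_password_length": min_password_length,
    }


# Illustrative values only; these are not real framework requirements.
framework_a = FrameworkRules(
    "Framework A", {"review digital systems"}, "annually", 10
)
framework_b = FrameworkRules(
    "Framework B",
    {"review digital systems", "review physical badge access"},
    "quarterly",
    14,
)
merged = merge_rules([framework_a, framework_b])
```

Here a client holding both hypothetical frameworks would see each cumulative step once, with the quarterly frequency and the 14-character minimum taking precedence.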

Early designs:

As we did lo-fi exploration of our schema requirements, we kept in constant contact with our engineering team to understand what was possible, and we kept our subject matter experts updated so they could gauge the upcoming effort of translating all of our content.

Early explorations led us to a model of composability we called LEGO, where composable blocks of content could be stacked together in the correct order in a simple arrangement of <p> and <li> HTML tags.
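The stacking idea can be sketched roughly as follows. This is a minimal illustration rather than the schema we shipped: the `Block` type, its fields, and the `render` function are hypothetical, and wrapping consecutive <li> blocks in an <ol> is an assumption made only so the output is well-formed HTML.

```python
from dataclasses import dataclass
from html import escape


@dataclass(frozen=True)
class Block:
    order: int                  # position of the block in the final stack
    kind: str                   # "p" for a paragraph, "li" for a list item
    text: str
    frameworks: frozenset[str]  # shown if the client has any of these


def render(blocks: list[Block], client_frameworks: set[str]) -> str:
    """Stack the blocks that apply to this client, in order, as HTML."""
    visible = sorted(
        (b for b in blocks if b.frameworks & client_frameworks),
        key=lambda b: b.order,
    )
    parts: list[str] = []
    in_list = False
    for block in visible:
        if block.kind not in ("p", "li"):
            raise ValueError(f"unsupported block kind: {block.kind}")
        # Open or close the (assumed) <ol> wrapper around runs of <li> blocks.
        if block.kind == "li" and not in_list:
            parts.append("<ol>")
            in_list = True
        elif block.kind != "li" and in_list:
            parts.append("</ol>")
            in_list = False
        parts.append(f"<{block.kind}>{escape(block.text)}</{block.kind}>")
    if in_list:
        parts.append("</ol>")
    return "\n".join(parts)


# Illustrative blocks; "A" and "B" stand in for real framework names.
blocks = [
    Block(2, "li", "Review every system being audited.", frozenset({"A", "B"})),
    Block(1, "p", "Perform access reviews periodically.", frozenset({"A", "B"})),
    Block(3, "li", "Review physical badge access.", frozenset({"B"})),
]
print(render(blocks, {"A"}))
```

A client with only framework "A" gets the shared paragraph and one step, while adding "B" stacks the extra step onto the same list instead of producing a second set of instructions.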

The long sprint of execution:

After a proof of concept was approved by our stakeholders, I was personally tasked with writing the MVP of our schema using our two most popular frameworks, SOC 2 and ISO 27001.

This schema had to be extensible, or able to be built upon, so it was important that I move to a third framework as fast as possible. Additionally, my wife was pregnant and my paternity leave was scheduled, leaving me with 6 weeks to transform ~45,000 words of dense SME content into our MVP, as well as hire and train a contract content writer to maintain it while I was away.

Below is an image of one composable “action item”, or set of instructions, as it was launched. Over 150 such action items were produced for the MVP.

The result:

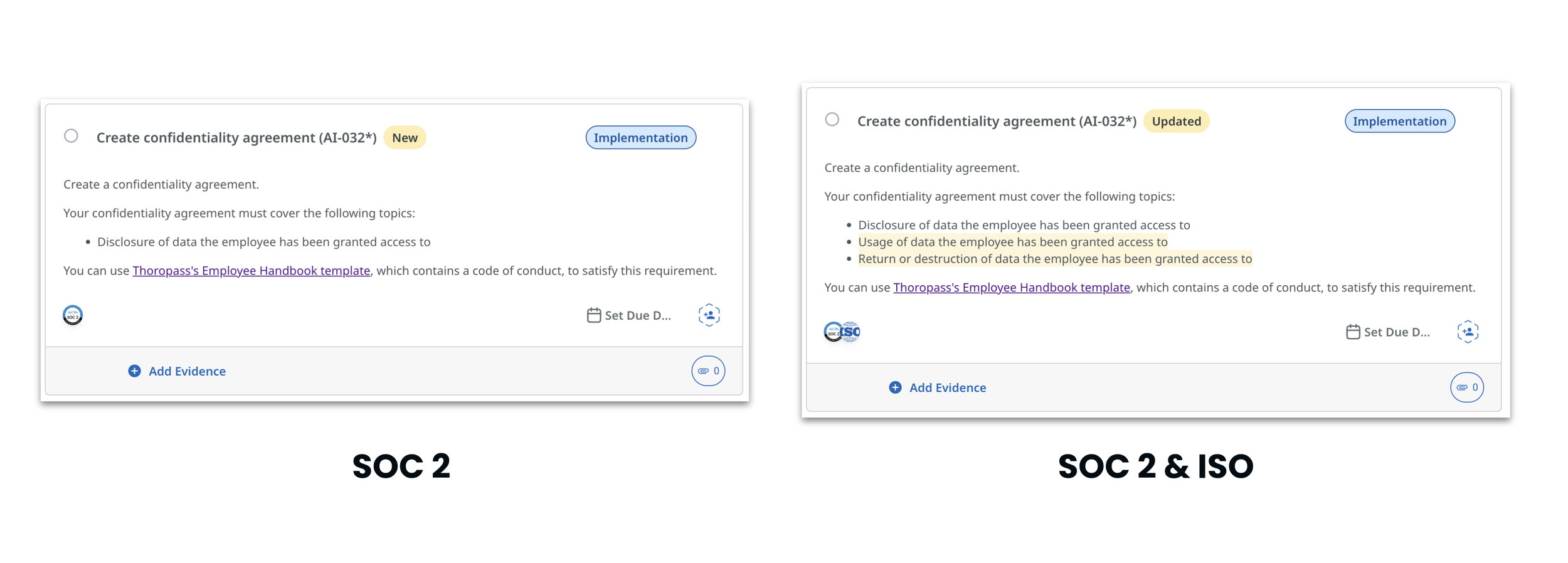

We launched our all-in-one content solution on time, one that programmatically created the content our users expected to see. Below is an example of the new, unified set of instructions, including the highlighting system used to indicate changes to the user’s content when new products are added.

When a change is this significant and wide-reaching, it can be difficult to accurately measure the impact. While we can’t be certain this was the only cause, after launch we saw a reduction of more than 30% in the average time organizations spent on tasks and a 14% reduction in audit loops (user-caused errors during the audit process) for affected customers.